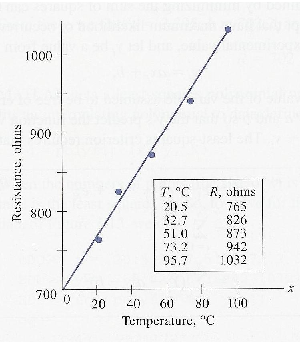

The two parameters of the line, its slope $a$ and intercept $b$, can be obtained from the plot.


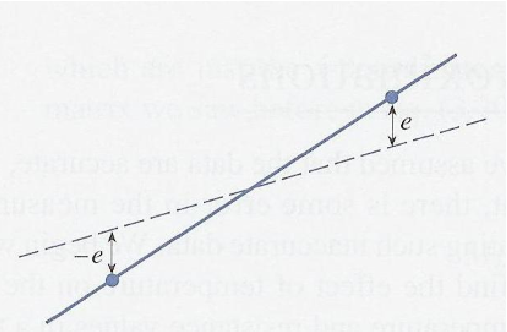

Let $Y_i$ represent an experimental value, and let $y_i$ be a value from the equation

$$y_i = a x_i + b,$$

where $x_i$ is a particular value of the variable assumed to be free of error.

We wish to determine the best values for the slope $a$ and intercept $b$ so that the $y$'s predict the function values that correspond to the $x$-values.

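Let $e_i = Y_i - y_i$ be the deviation between the measured and the predicted value. The least-squares criterion requires that

$$S = e_1^2 + e_2^2 + \cdots + e_N^2 = \sum_{i=1}^{N} (Y_i - a x_i - b)^2$$

be a minimum. Here $N$ is the number of $(x, Y)$-pairs.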
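To make the least-squares criterion concrete, the following minimal sketch evaluates the sum of squared deviations for a candidate line. The function name `sum_squared_error`, the parameter names `a` (slope) and `b` (intercept), and the sample data are assumptions made for illustration; none of them come from the original notes.

```python
def sum_squared_error(xs, ys, a, b):
    """Sum of squared deviations between the measured ys and the line a*x + b."""
    return sum((y - (a * x + b)) ** 2 for x, y in zip(xs, ys))


# Made-up measurements that lie close to the line y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.1, 2.9, 5.2, 6.8]

s_good = sum_squared_error(xs, ys, 2.0, 1.0)  # candidate close to the data
s_bad = sum_squared_error(xs, ys, 1.0, 1.0)   # candidate with the wrong slope

print(s_good, s_bad)
```

The candidate that follows the data more closely yields the smaller sum, which is the sense in which the criterion ranks competing lines.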

We reach the minimum by a proper choice of the parameters $a$ and $b$, so they are the variables of the problem.

At a minimum of $S$, the two partial derivatives $\partial S/\partial a$ and $\partial S/\partial b$ will be zero.

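Remembering that the $x_i$ and $Y_i$ are data points unaffected by our choice of values for $a$ and $b$, we have

$$\frac{\partial S}{\partial a} = 0 = \sum_{i=1}^{N} 2\,(Y_i - a x_i - b)(-x_i),$$

$$\frac{\partial S}{\partial b} = 0 = \sum_{i=1}^{N} 2\,(Y_i - a x_i - b)(-1).$$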
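As a sanity check on the partial derivatives, this sketch compares the analytic expressions with central finite differences. It assumes the line is written as `a*x + b` and that `S` is the sum of squared deviations; the function names, the trial point, and the data are hypothetical.

```python
def S(xs, ys, a, b):
    """Sum of squared deviations from the line a*x + b."""
    return sum((y - a * x - b) ** 2 for x, y in zip(xs, ys))


def grad_S(xs, ys, a, b):
    """Analytic partial derivatives of S with respect to a and b."""
    dS_da = sum(2 * (y - a * x - b) * (-x) for x, y in zip(xs, ys))
    dS_db = sum(2 * (y - a * x - b) * (-1) for x, y in zip(xs, ys))
    return dS_da, dS_db


# Made-up data and an arbitrary trial point (a, b).
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.1, 2.9, 5.2, 6.8]
a, b, h = 1.5, 0.5, 1e-5

# Central differences approximate each partial derivative.
num_da = (S(xs, ys, a + h, b) - S(xs, ys, a - h, b)) / (2 * h)
num_db = (S(xs, ys, a, b + h) - S(xs, ys, a, b - h)) / (2 * h)
an_da, an_db = grad_S(xs, ys, a, b)

print(an_da, num_da)
print(an_db, num_db)
```

Because `S` is quadratic in `a` and `b`, the central difference agrees with the analytic value up to rounding error.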

Dividing each of these equations by $-2$ and expanding the summation, we get the so-called normal equations

$$a \sum x_i^2 + b \sum x_i = \sum x_i Y_i,$$

$$a \sum x_i + b\,N = \sum Y_i.$$

All the summations run from $i = 1$ to $i = N$.
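The normal equations form a 2-by-2 linear system in the slope and intercept, so they can be solved directly. The sketch below does so with Cramer's rule, assuming the line is written as `y = a*x + b`; the function name `fit_line` and the sample data are illustrative assumptions rather than part of the original notes.

```python
def fit_line(xs, ys):
    """Solve the two normal equations for slope a and intercept b."""
    n = len(xs)
    sx = sum(xs)                               # sum of x_i
    sy = sum(ys)                               # sum of Y_i
    sxx = sum(x * x for x in xs)               # sum of x_i^2
    sxy = sum(x * y for x, y in zip(xs, ys))   # sum of x_i * Y_i

    # Determinant of the system
    #   a*sxx + b*sx = sxy
    #   a*sx  + b*n  = sy
    det = n * sxx - sx * sx
    if det == 0:
        raise ValueError("need at least two distinct x-values")

    a = (n * sxy - sx * sy) / det
    b = (sxx * sy - sx * sxy) / det
    return a, b


# Made-up data lying exactly on y = 2x + 1.
a, b = fit_line([0.0, 1.0, 2.0, 3.0], [1.0, 3.0, 5.0, 7.0])
print(a, b)  # prints 2.0 1.0
```

For larger or ill-conditioned problems, a library least-squares routine is usually a safer choice than forming the sums by hand; this version is kept explicit so each sum can be matched to a term in the normal equations.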

Solving the two normal equations simultaneously gives the values of $a$ and $b$.